Peer review is one of the cornerstones of modern scientific research. In this process, a study that has not yet been published is sent to several experts in the field, who assess its content, structure, and scientific merit. These reviewers may request revisions before publication, and in many cases they recommend rejection of the manuscript. Only once the authors have responded satisfactorily to the reviewers’ comments is the manuscript accepted for publication. The goal, of course, is to ensure that the published work meets accepted professional standards.

Peer review can take many months. Platforms such as arXiv allow researchers to upload a draft of their paper before, or while, it is being considered by a journal.

(Photo: Shutterstock)

Without such a mechanism, the label “research” could encompass unchecked findings and uncontrolled data, making it much harder for readers to distinguish genuine discoveries from unreliable claims. For many years, that was largely the reality. Even before peer review became standard, journals had screening and acceptance processes, but decisions rested almost entirely with editors. That left considerable room for bias, pressure, and conflicts of interest — risks that are never entirely absent from the publication process.

Today, peer review has the power to determine, through a careful and supposedly independent process, whether a study is accepted or rejected. As the main filter journals use before publishing scientific work, it is hard to imagine modern science retaining its credibility or substance without it.

Yet for all its importance, peer review has serious flaws. Winston Churchill once famously observed that democracy is “the worst form of government except for all those other forms that have been tried from time to time.” Many would say the same logic applies to peer review. The author of these lines, for example, once waited almost a year for a journal to grant final approval for an article. Many researchers have had similar experiences, leaving them to wonder why the process takes so long, why it is so draining, and whether it necessarily improves the original work — or simply reinforces the status and egos of reviewers who have the final say.

In response to these challenges, preprint platforms such as arXiv were created, allowing researchers to upload papers before they undergo peer review. In recent years, related initiatives have emerged as well. But efforts to expand this model across disciplines have run into difficulties. Nature reported in November 2025 that arXiv had tightened its moderation practice for review, survey and position papers in computer science, requiring such submissions to have already been accepted by a peer-reviewed journal or conference before being considered for the CS category. The change followed what arXiv described as an unmanageable influx of such manuscripts, a problem made more urgent by the ease with which large language models can now generate plausible but often non-original scientific text.

Against this backdrop, another attempt is emerging to bring some light to the end of the tunnel. It comes from people who are not merely complaining or suffering in silence, but taking practical steps to push scientific culture in a better direction — with the help, naturally, of artificial intelligence.

Portrait of the scientist as a young man

Meet Oded Rechavi of the Department of Neurobiology at Tel Aviv University. Rechavi is an unconventional researcher, in the best sense of the word. In recent years, he has challenged the traditional image of the solemn, tie-wearing scientist. His 145.7K followers on X enjoy a steady stream of memes and sharp observations about academia and research culture. But do not let that mislead you: Rechavi is a highly respected scientist. He completed a direct-track PhD in neuroscience at the age of 30 and, after a postdoctoral fellowship at Columbia University, joined the faculty at Tel Aviv University.

Over the years, Rechavi’s research has pushed the boundaries between the natural sciences and fields such as decision theory and behavioral science. In one study, he and his colleagues showed that the worm C. elegans can display behavior that appears “irrational,” linking it to specific neural-circuit constraints. Their findings suggest that seemingly irrational decisions may arise from the way compact nervous systems process information under limited resources. In another line of research, Rechavi provided evidence for a form of non-Mendelian, epigenetic inheritance sometimes described as “Lamarckian-like.” In this case, acquired responses do not alter the DNA sequence itself, but can be transmitted across generations through small RNA molecules that regulate gene expression.

Agent of change

Although his scientific work has attracted attention in both academia and the media, Rechavi has remained troubled by scientific culture itself: its slow routines, conservative conventions, and norms that can seem almost arbitrary in a modern context — including, for example, which reviewers a submitted paper happens to “fall” to. He has published several opinion pieces on different aspects of scientific culture, and in an interview with Cell, he spoke critically about peer review, a process that appears to have frustrated him more than once. He has never argued, of course, that peer review is fundamentally flawed. Rather, he believes it needs to be updated and adapted to the modern era.

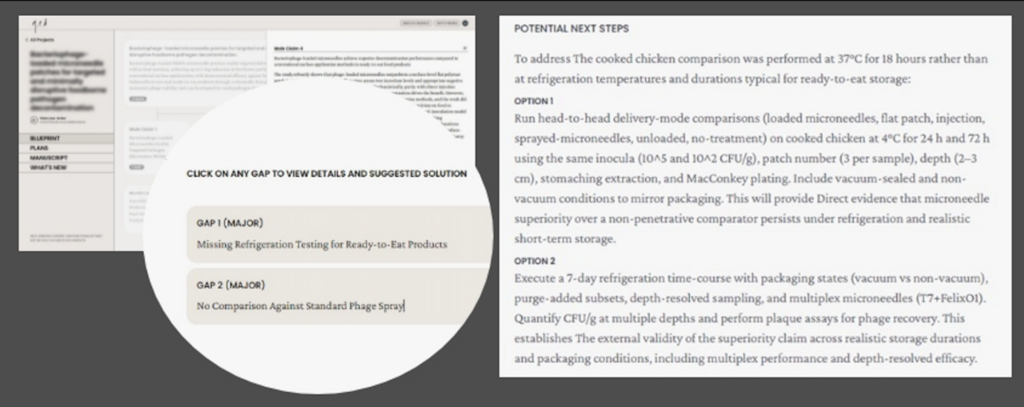

q.e.d can identify gaps in research and suggest possible solutions. Example of the platform’s output after evaluating a study recently published in a respected scientific journal. Source: Screenshot from a platform test conducted by the Davidson Institute of Science Education’s science communication team, approved for publication by Oded Rechavi

That concern eventually led to q.e.d Science, a company Rechavi founded with colleagues after working for about a year and a half with a team of programmers, scientists and artificial-intelligence experts. The company develops an AI tool that provides critical feedback on scientific manuscripts before they enter formal peer review. Its name comes from the Latin phrase quod erat demonstrandum, meaning “that which was to be demonstrated.” The tool offers researchers a way to test and strengthen their claims earlier without compromising the quality of scientific papers.

The platform is based on a large language model, with an interface similar to a chatbot such as ChatGPT. But unlike a general-purpose chatbot, q.e.d is designed specifically for scientific critique, to support what researchers call “critical thinking.” According to its developers, the system analyzes the structure of a manuscript, identifies its main claims and innovations, points out gaps in the findings or logic, and highlights the work’s strengths and weaknesses. To build the system, the developers used open repositories of peer reviews and published papers, along with manual annotations by experts who marked gaps in articles specifically for the platform.

Researchers can submit a draft of a paper and receive structured feedback within minutes. They can also use the tool to critically evaluate papers by others. Rechavi believes this could help researchers identify flaws that exist in any research project, address them earlier, and reduce at least some of the criticism they might later receive from reviewers. In the broader vision, researchers could eventually submit a paper to a journal together with a q.e.d evaluation, helping editors decide more easily whether the paper should be sent out for review.

No room for small dreams

In an interview with the Davidson Institute website, Rechavi explains what led him to found the company: “I really enjoy being a scientist, and I think academia is an amazing place. It gives you a great deal of freedom and the opportunity to be creative. But the scientific publishing industry, and the way science evaluates itself, is not good — or at least could be much better. This has always mattered to me, and most of what I talk about on social media is connected to it. In the current state of the academic system, there is no doubt that young researchers are harmed. Unfortunately, one of the factors that most affects the peer review you receive is who you know and how established you are in the field.”

The new platform may eventually help reduce biases related to seniority or academic status, giving researchers a more equal opportunity to improve their work before submission. Portrait of a researcher sitting and refreshing the page while waiting for the reviewers’ decision

(Source: Liat Peli using Gemini)

This raises an obvious question: how can we ensure that AI-generated criticism is accurate and substantive, and that it does not replace careful human judgment? “We take these questions very seriously,” he says. “The q.e.d team includes several scientists who are working to develop the product as a focused, reasoned force multiplier for human critical thinking — and of course, we neither want nor aim to replace it.”

That distinction is crucial. There is a major difference between an author using AI to improve their own manuscript before submission and a reviewer uploading a confidential manuscript to a generic AI tool during formal peer review. The first is increasingly being explored as a form of author-side scientific self-checking; the second raises serious questions about confidentiality, intellectual property, data protection and accountability. Major publishers including Nature Portfolio, Elsevier and Springer Nature now warn reviewers not to upload unpublished manuscripts into ordinary generative-AI tools, and require or request transparency when AI has assisted any part of the review process.

For all its promise, using artificial intelligence in peer review also raises concerns that must be taken seriously. A recent column in Nature outlined several possible risks, including threats to manuscript confidentiality, the difficulty AI may have in recognizing genuine scientific innovation, and biases rooted in the specific datasets on which the system was trained. There is also the danger of relying too heavily on AI tools, which could weaken human critical judgment — a concern often raised outside academia as well. Another column suggested that AI tools should be used as an aid to human reasoning rather than a substitute for it, subject to close scrutiny and oversight, and that potential flaws should be identified early, before they do more harm than good.

Should AI also play a role in evaluating competitive research grants or admissions committee decisions? According to Rechavi, “Artificial intelligence that is trained ‘properly’ can serve as a significant force multiplier for human judgment and critical thinking, but I have no intention of taking humans out of the loop. The final decision should remain in the hands of human beings, for human beings. I hope this will happen only after the scientific community has done very serious work to make sure that AI performs better in every respect. It should not simply be more thorough; we will also need to see that AI can identify original, promising research even when it is completely different from anything familiar.”

A hopeful, cautious future

The q.e.d platform was opened to the research community after a limited pilot involving Rechavi’s colleagues. Since then, the idea behind it has begun to move beyond a small experiment: bioRxiv and openRxiv have announced a pilot allowing authors to send their own preprints to q.e.d Science for AI-generated manuscript assessment. The platform grew out of the life sciences, and much of its public testing and early evidence still comes from that field. At the same time, q.e.d now presents itself as a broader tool for evaluating scientific reasoning, and Rechavi and his team hope to expand its use to other areas of the natural sciences and engineering. In the future, he adds, he would like the system to address claims in any field where scientific validity can be tested, including many areas of the social sciences and humanities.

The tool has considerable potential, but its real impact cannot yet be known. Researchers will need to evaluate its performance over time, across different disciplines and different kinds of manuscripts. They will also need to ask not only whether the feedback is fast or impressive, but whether it is useful, fair, secure and capable of improving scientific work without weakening human judgment. In an era when AI can generate scientific-looking text with unsettling ease, tools that promise to improve review will themselves need to be reviewed carefully.

In conclusion, I asked Rechavi what he believes should be the next major change in scientific culture, assuming the venture succeeds. “Ultimately, I want two things. The first is to identify and publish, as quickly as possible, the best, most original, and most valid science above the endless noise [referring to the flood of research literature — Y.B.]. The second is for science to be an enjoyable profession, and for talented people to choose it as a way of life.”

Aspirations like these deserve to be welcomed, with curiosity but also with caution. q.e.d and similar tools may not end the Sisyphean labor of peer review, and they certainly should not replace the responsibility of human reviewers and editors. But if they can help researchers identify weaknesses earlier, sharpen their claims and reduce some of the unnecessary friction in scientific publishing, they may also help ensure that important findings are not delayed unnecessarily and that good science reaches readers faster.