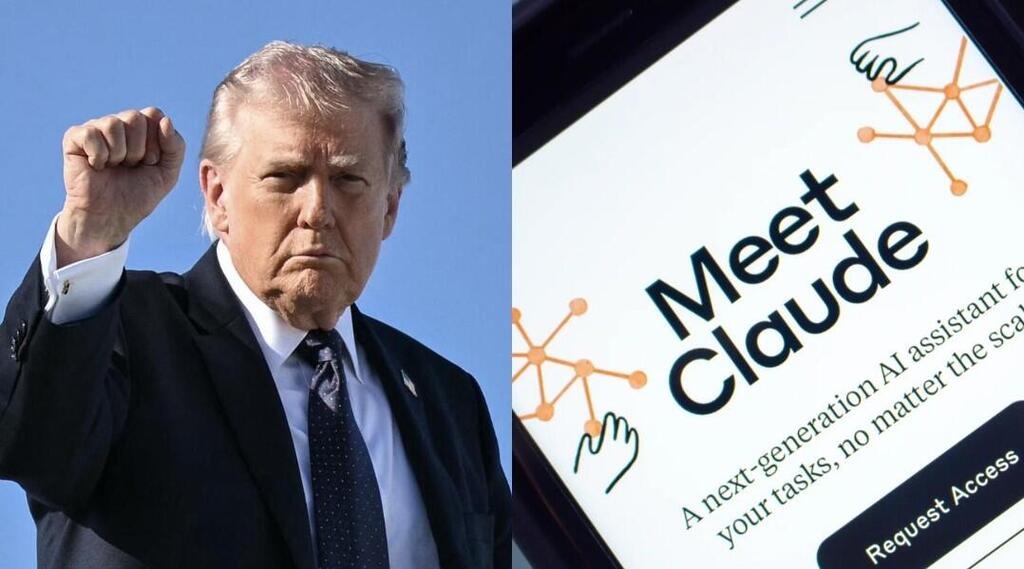

Just hours after President Donald Trump declared artificial intelligence company Anthropic a “national security risk,” its AI model Claude played a central role in a large-scale US airstrike on Iran, according to a new report in US media.

On Friday, Trump signed an executive order instructing all federal agencies to immediately halt use of Anthropic’s AI models. The move followed the company’s refusal to grant the military what officials described as unrestricted use of its technology for any lawful purpose. Anthropic had instead insisted on ethical limitations.

Yet while the ink on the order was still fresh, US Air Force jets were already en route to targets in Iran. According to sources familiar with the matter, US Central Command systems used Claude to conduct intelligence assessments, identify targets and simulate battlefield scenarios in real time.

The dispute between the Trump administration and Anthropic, considered one of the world’s three leading AI firms alongside OpenAI and Google, extends beyond business considerations. Anthropic promotes what it calls “Constitutional AI,” embedding democratic and ethical principles into its models.

During negotiations over a major Pentagon contract, the company reportedly refused to allow its systems to be used for fully autonomous lethal decisions without human oversight or for mass surveillance. Administration officials viewed the stance as defiance. Defense Secretary Pete Hegseth described the company as a supply chain risk, a designation typically reserved for hostile foreign firms such as Huawei.

As Anthropic was pushed aside, competitors moved quickly. Reports say OpenAI and Elon Musk’s xAI recently signed new agreements with the administration to deploy their models in classified environments. Claude is widely regarded as highly accurate in analyzing complex texts and code, but it is constrained by ethical safeguards.

By contrast, xAI’s Grok is marketed as offering greater flexibility with fewer ideological constraints. OpenAI has also lifted its blanket prohibition on military use of its GPT-4o model as it seeks to become a primary infrastructure provider for the US Defense Department.

Experts caution that removing Claude from military systems would amount to “open-heart surgery.” The model is deeply integrated into systems used by data analytics company Palantir, which has supported US security operations. The transition away from Claude is expected to take at least six months, which helps explain why the administration continues to use a tool it has publicly criticized.

The use of artificial intelligence on the battlefield is not new, but its scale in 2026 is unprecedented. The US military employed the DART logistics planning system during the 1991 Gulf War, and the modern shift accelerated in 2017 with Project Maven, which was designed to identify objects in video footage.

In Israel, systems such as “Habsora” and “Lavender,” widely reported on during fighting in Gaza, similarly process large volumes of data to generate target banks. A key difference is that Israeli systems are largely developed internally by military technology units, while the US relies heavily on private commercial firms, creating friction between policymakers and tech companies.

As Europe moves to tighten regulation of AI and China deploys language models for influence operations, the United States is undergoing a rapid technological transformation. The central question is no longer whether AI will shape warfare, but who determines the values embedded in the digital systems that guide life-and-death decisions.