If the IDF’s operations in Gaza were the pilot, then Operation Roaring Lion, known in its American version as Epic Fury, may be described as the first full-scale artificial intelligence war in history.

Until recently, AI served mainly as a tool for data analysis. In the current war, it has moved into the driver’s seat. Both the Israeli and U.S. militaries are making extensive use of the emerging technology across a wide range of areas: planning the vast scope of attacks on Iranian targets, coordinating hundreds of aircraft in the air simultaneously, making rapid decisions on how to intercept ballistic missiles and more.

Destruction in Tehran

(Photo: Majid Asgaripour/WANA (West Asia News Agency) via REUTERS)

A dramatic shift

Details are, of course, wrapped in heavy secrecy, but some are gradually emerging. These are not Terminator-style attack robots but decision-support systems that can process quantities of data intelligence analysts could not digest even over decades, freeing the analysts to focus on the information that truly matters.

The British newspaper The Guardian wrote this week that the astonishing pace at which targets are generated and approved, and strikes carried out, in the current war is “faster than the speed of thought.” The shift marks a dramatic transformation in modern warfare doctrine, making warfare far more precise, economical and lethal.

Without doubt, AI significantly shortens the time between the initial identification of a target and its destruction. For comparison, during World War II the interval between collecting intelligence, such as aerial reconnaissance, and carrying out a bombing mission could stretch to six months.

Looking at the more recent past, The Times of London reported that identifying targets during the U.S. invasion of Iraq required an intelligence unit of 2,000 soldiers. In the current operation in Iran, the same task required just 20.

U.S. Central Command, or CENTCOM, is drawing on “Project Maven,” announced in 2017, and integrating large language models (primarily Anthropic’s Claude and, as of this week, models from OpenAI and xAI) to summarize raw intelligence from hundreds or thousands of sources simultaneously and produce operational directives.

The system scans thousands of hours of drone footage, amounting to terabytes of data, identifies objects such as vehicles, people and types of weapons, and flags them for intelligence operators. Software from Palantir helps the U.S. military, and apparently other Western militaries as well, make decisions and select targets using this information.

In August 2023, then-Deputy Defense Secretary Kathleen Hicks unveiled the Pentagon’s Replicator program, which aims to field thousands of autonomous drones, vessels and vehicles that operate without direct human control over each individual platform.

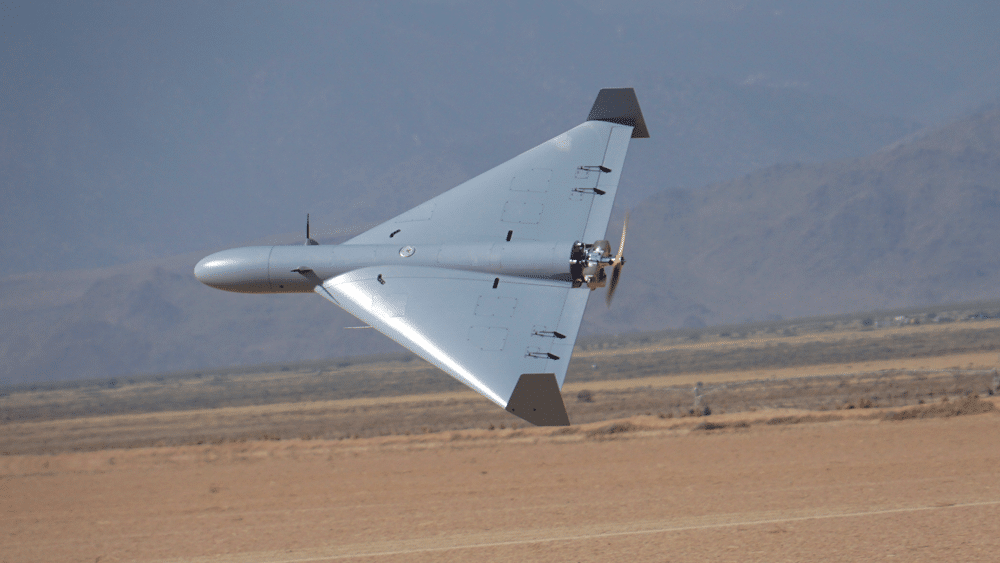

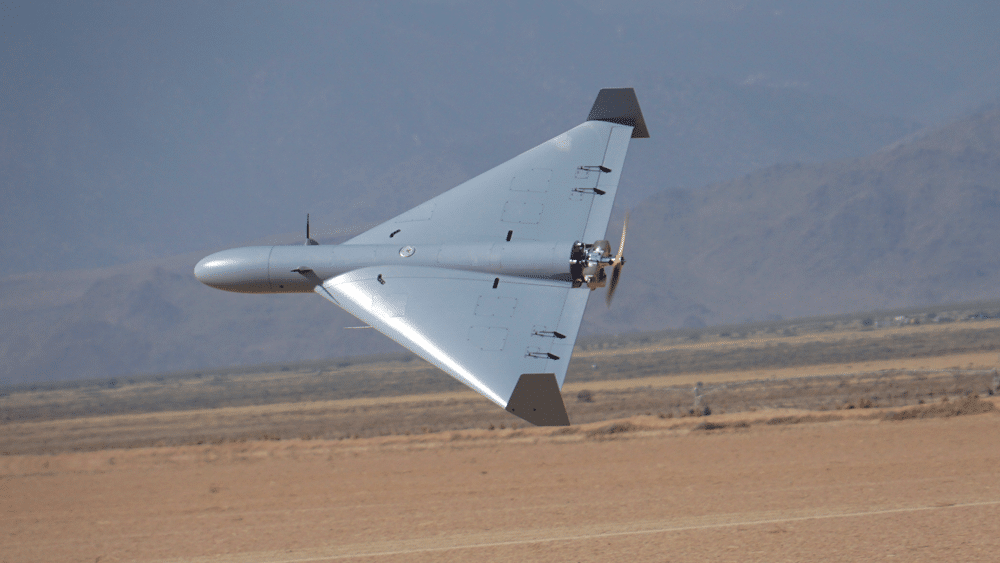

At least some of these systems are likely taking part in the campaign against Iran. Over the past two weeks, the United States has also deployed to the Middle East a unit operating swarms of LUCAS unmanned aerial vehicles: relatively inexpensive drones, about $35,000 per unit, produced by Arizona-based SpektreWorks, that operate fully autonomously. They can communicate with one another in the air, divide targets among themselves and carry out suicide attacks on Iranian radar systems without guidance from the ground.

A memo sent earlier this year by U.S. Defense Secretary Pete Hegseth to senior military officials turned the issue into a directive. “I am ordering the Department of Defense,” he wrote, “to accelerate U.S. military dominance in artificial intelligence by becoming an ‘AI-first’ fighting force across all domains, from front to rear.”

A LUCAS or FLM-136 loitering attack drone. US Central Command said it had deployed the system in the Middle East for the first time

Israel also in the picture

The United States is not alone. According to foreign reports, Israel also used AI systems known as Habsora (“The Gospel”) and Lavender this week; both helped identify and locate targets in Gaza over the past two years.

According to an investigation by the Israeli outlet +972 Magazine, widely cited by The Guardian in 2023, the Gospel system automatically generates recommendations for striking buildings and facilities, which in the current campaign could include nuclear sites, oil installations and Revolutionary Guard headquarters. It processes and cross-references satellite imagery, communications data and human intelligence gathered from thousands of sources to identify structures and sites used by militant operatives.

If the Gospel focuses on buildings, Lavender, according to those reports, focuses on people. It is an AI-based database that scans and processes vast amounts of surveillance data, including social connections and location history, to flag potential targets.

The model assigns each individual a score between 1 and 100. When the score passes a certain threshold, Lavender marks the person as a military target. Another system, known as “Where’s Daddy?,” completes the process by tracking those flagged and alerting operators in real time when they enter a specific residential building.

At the same time, the Financial Times reported this week that Israel had hacked into nearly all of Tehran’s traffic cameras and was transmitting their footage to servers in Tel Aviv. This made it possible to automatically track the movements of the bodyguards of senior officials who arrived at the house where Iran’s supreme leader, Ayatollah Ali Khamenei, was killed last Saturday. AI-based algorithms analyzed details such as the guards’ duty schedules and commuting routes.

According to +972 Magazine, AI systems also helped Israel analyze the cellular and communications networks of Iran’s senior leadership, leading to the targeted killing of Khamenei and dozens of senior Iranian officials in the heart of Tehran.

Only last September, Microsoft blocked Israel’s Unit 8200, the military’s elite signals intelligence unit, from using some of its Azure cloud services after discovering that they had been used to store and process data for a mass surveillance system tracking Palestinians in Gaza and the West Bank. The only way to extract meaningful insights from such enormous quantities of raw data is through artificial intelligence.

Operation Roaring Lion is also the first operation in which the IDF’s new AI division, known as “Bina,” has taken part. Established only a few months ago and headed by a brigadier general, the division consolidated most of the military units that had previously worked in the field.

The goal, according to Maj. Gen. Aviad Dagan, head of the IDF’s C4I and Cyber Defense Directorate, is “to turn one tank into 100 tanks and one soldier into 100 soldiers.”

AI enters the defensive arena

Israeli civilians also received proof this week of the effectiveness of AI systems in protecting lives.

When multiple types of missiles are launched toward Israel from different sites, AI rapidly performs what is known as “sensor fusion.” In simple terms, it merges data from Israeli and American radars and other sensors at sea, in the air and on the ground into a single picture, then decides within milliseconds which interceptor to launch against which target to maximize the chances of a successful interception.

At the same time, another system automatically generates precise intelligence allowing forces to rapidly target Iranian missile launchers seconds after they are detected.

In the IDF, responsibility for deciphering the chaos in the skies — identifying objects, tracking missiles in flight and managing interceptions — lies with Ofek 324, a technological unit of the Israeli Air Force. The precise algorithmic processing that produces a complete aerial picture is carried out by two computer systems developed by the unit.

The older of the two provides a “sky picture” of every aircraft flying over or near Israeli airspace; the second produces a real-time picture of ballistic threats heading toward Israel. Alongside them operates the command-and-control system that orchestrates the entire network.

The complex algorithms are handled by a dedicated AI team within Ofek. The team has also developed standalone applications, used for example to plan safe flight routes for strike aircraft and to assess bomb damage.

The same defense system underpins Israel’s advanced civilian warning system. Using sophisticated algorithms, it can predict where and when a missile heading toward Israel is expected to strike and issue alerts only in the areas actually at risk.

As revealed this week by the chairman of the U.S. Joint Chiefs of Staff, Gen. Dan Caine, much of the war is also being fought behind the scenes through massive cyberwarfare. During the earlier 12-day war, foreign technology sites reported that groups linked to Israel had used AI to cripple Iran’s banking system, automatically scanning for security vulnerabilities and injecting disruptive malicious code without human intervention.

A testing ground for the U.S. and China

The confrontation with Iran has effectively become a live testing ground for Western AI technology. But China is also preparing for a future war in which victory will not belong to the side with the most tanks or aircraft, but to the one with the fastest and most effective algorithms.

For Beijing, AI is not merely a support tool but, openly, the core of modern military strategy. China is considered a global leader in swarm technology. The state-owned China Electronics Technology Group Corporation (CETC) has developed the ability to launch swarms of more than 200 drones that communicate with one another without human involvement, divide reconnaissance and strike missions among themselves and even compensate for the loss of individual units.

A drone displayed during a military parade in Beijing

(Photo: REUTERS/Maxim Shemetov)

Unlike Western militaries, China does not require a “human in the loop” in the decision-making process. A swarm could theoretically make a collective attack decision based on autonomous target identification.

More broadly, drones have become the most widely used weapon in violent conflicts around the globe over the past decade. Among the AI technologies that have transformed the war in Ukraine is Skynode, developed by the company Auterion: an AI navigation system installed on drones that allows them to operate without GPS. When Russia jams GPS signals, the system enables Ukrainian drones to navigate and identify targets by visually matching terrain with satellite maps stored in memory.

The clash with Anthropic

By sheer coincidence, the war with Iran erupted in the middle of a sharp confrontation between the U.S. Defense Department and the White House on one side and the AI company Anthropic on the other. Anthropic produces the Claude model used by the U.S. military during the operation.

For decades, the division of labor between Silicon Valley and the Pentagon was straightforward: the government paid and companies provided software to the military without asking too many questions, and everyone prospered. In the age of artificial intelligence, however, the product is no longer merely a spreadsheet — it is the brain behind the weapon itself, raising complex ethical dilemmas.

Anthropic has consistently positioned itself as particularly strict about safety and ethics. Co-founder and CEO Dario Amodei frequently warns about the dangers of uncontrolled AI use. The company refuses to rely solely on a declaration that the military will use its products for “any lawful purpose.”

Anthropic insists its models not be used in two areas: mass civilian surveillance and autonomous weapons. “I deeply believe,” Amodei said, “in the existential importance of using AI to defend the United States and other democracies and defeat our enemies, but today AI systems simply are not reliable enough to operate fully autonomous weapons.”

On the understanding that these rules were acceptable to the government, Anthropic partnered with Palantir and, last summer, signed a contract with the Pentagon worth about $200 million. Claude was also the first model integrated into the U.S. military’s classified networks.

But after the kidnapping of Venezuelan leader Nicolás Maduro, the company asked Defense Department officials how Claude had been used in the operation. The Pentagon responded sharply, arguing that the two areas Anthropic insists on restricting contain many “gray zones,” and that it is not always easy to determine what falls within them.

Negotiations with the company collapsed. Hegseth even declared Anthropic “a national security risk,” a label usually reserved for companies from hostile countries such as China. Last Friday, President Donald Trump signed an order instructing all federal agencies to immediately stop using its AI models.

Yet in practice, as noted, Anthropic’s Claude still played a central role this week in planning the strikes in Iran. On Tuesday, competitor OpenAI entered the picture, saying it had imposed similar conditions on the Defense Department’s use of its models and that they had been accepted. According to reports, Google is also negotiating with the Pentagon to integrate its Gemini AI model into a classified military system.

Is Anthropic correct in warning about the ethical and moral dangers of AI when it becomes a weapon? Can an algorithm truly distinguish between a militant holding an RPG and a child holding a toy? And who bears responsibility in the case of a mistaken strike — the programmer who wrote the code, the commander who approved the system or the machine itself?

In the IDF — as in NATO — the answer is unequivocal: significant decisions, especially those involving human life, will ultimately always be made by a human commander. None of the military’s AI systems makes operational decisions on its own; they are designed only for data analysis and processing.

Even when a target is selected based on the data, it is individually reviewed by senior officers. “There will never be a situation in which artificial intelligence makes decisions for us,” the military says. Officials also argue that AI actually reduces harm to civilians by enabling greater precision.

Yet the technological race has its own dynamics. Companies such as Palantir and Anduril, which work extensively with the Pentagon, NATO and Ukraine, repeatedly argue that if the United States imposes strict limitations on itself while China develops autonomous systems 100 times faster, Washington could lose the race.

In their view, military AI development is the only way for the West to defeat China and Russia in the technological arms race. “If we are not there with lethal AI,” they warn, “our enemies will be.”

Concerns about dependence on tech giants

Precisely because AI now plays such a central role on the battlefield, Israeli officials worry about the day when international technology giants — which supply both the advanced models and the massive server farms required to run them — might decide to “pull the plug.”

Project Nimbus, the $1.2 billion contract signed by Google and Amazon with the Israeli government to provide large-scale cloud services, has already become a symbol of internal protests within both companies over cooperation with the Israeli military. Within Israel’s defense establishment, officials increasingly recognize the danger of “putting all the eggs in one basket.” Relying solely on public cloud services and paid AI models poses a strategic risk.

That is why the Nimbus contracts included legal “point-of-no-return” clauses preventing Google or Amazon from terminating their services due to political pressure or policy decisions. The fact that the servers are physically located in Israel and managed by local teams also offers some reassurance.

To overcome restrictions such as those imposed by Anthropic, however, the leading solution is the adoption of open-source AI models, such as Meta’s Llama. Such models can run on IDF servers without internet connectivity and can be trained on classified military data without the information leaking outside.

At the same time, Israel’s Defense Ministry is investing heavily in developing AI technologies that do not depend on Silicon Valley. The Directorate of Defense Research and Development encourages early-stage startups in military AI. Companies such as Elbit Systems, Rafael Advanced Defense Systems and Israel Aerospace Industries have established large internal AI divisions. Instead of buying ready-made solutions from Microsoft, they are developing “local” AI embedded directly in missiles, drones or tanks.

One challenge Israel cannot solve on its own is computer chips. The world’s most advanced AI models run on specialized processors designed by Nvidia. Although much of this technology is developed in Israel, if a future U.S. administration were to impose an embargo on exporting such chips, Israel’s defense establishment could face a serious problem.