NVIDIA announced this week at its annual GTC artificial intelligence conference that its Vera Rubin platform, aimed at powering the next generation of so-called agentic AI, has entered commercial production with seven new chips designed to expand the scale of the world’s largest AI factories.

The platform combines seven chips: the NVIDIA Vera central processing unit, or CPU; the NVIDIA Rubin graphics processing unit, or GPU; the NVIDIA NVLink 6 switch; the NVIDIA ConnectX-9 SuperNIC; the NVIDIA BlueField-4 data processing unit, or DPU; the NVIDIA Spectrum-6 switch; and the new NVIDIA Groq 3 LPU.

NVIDIA said the chips were designed to work together as a large-scale AI supercomputer capable of handling every stage of AI development, from massive pre-training to post-training and test-time scaling, as well as real-time inference for AI agents.

“Vera Rubin is an enormous generational leap — seven groundbreaking chips, five server racks and one giant supercomputer — built to drive every phase of AI,” NVIDIA founder and CEO Jensen Huang said. “The tipping point for AI agents is here, and Vera Rubin is kicking off one of the largest infrastructure build-outs in history.”

Dario Amodei, Anthropic’s co-founder and CEO, said demand for increasingly complex reasoning tasks, agent-based workflows and critical decision-making required infrastructure that could keep pace.

“NVIDIA’s Vera Rubin platform gives us the compute, communication and system design capabilities that let us continue delivering results while advancing the safety and reliability our customers depend on,” Amodei said.

OpenAI CEO Sam Altman said NVIDIA’s infrastructure had been central to pushing the boundaries of AI. “With NVIDIA Vera Rubin, we will run more powerful models and agents at enormous scale and deliver faster, more reliable systems to hundreds of millions of people,” Altman said.

A central element of the new architecture is what NVIDIA described as its largest POD, a supercomputer made up of multiple server racks developed specifically for AI and designed to operate as one large, coherent system through close integration of compute, networking and storage.

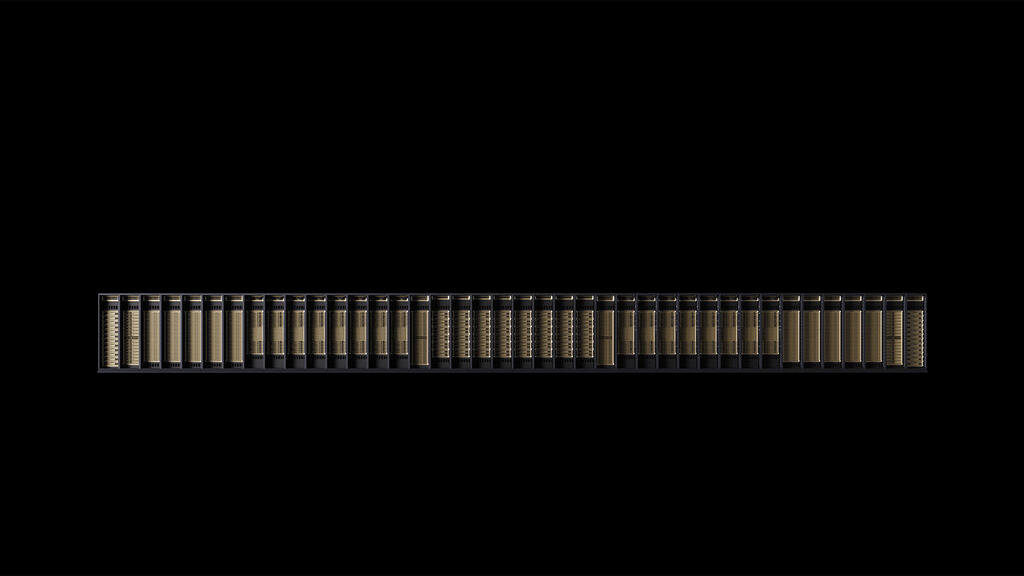

At the core of that system is the NVIDIA Vera Rubin NVL72 rack, which links 72 Rubin GPUs and 36 Vera CPUs using NVLink 6 switches and ConnectX-9 SuperNICs, with BlueField-4 DPUs supporting communications.

NVIDIA said the Vera Rubin NVL72 racks could train large mixture-of-experts models using one-quarter the number of GPUs required by its Blackwell platform, while delivering up to 10 times the inference throughput per watt at one-tenth the cost per token.
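To make those ratios concrete, the short sketch below applies them to a made-up Blackwell baseline. The baseline GPU count and the cost per million tokens are hypothetical values chosen only for illustration; they are not NVIDIA figures, and the company's "up to" wording means the results should be read as a best case rather than a forecast.

```python
# Hypothetical Blackwell baseline; these two numbers are illustrative
# assumptions and do not come from NVIDIA.
baseline_gpus = 1024
baseline_cost_per_million_tokens = 2.00  # US dollars, illustrative only


def rubin_best_case(gpus, cost_per_million_tokens):
    """Apply NVIDIA's claimed ratios to a Blackwell baseline.

    One-quarter the GPUs for mixture-of-experts training and
    one-tenth the cost per token, both taken at face value.
    """
    return {
        "training_gpus": gpus / 4,
        "cost_per_million_tokens": cost_per_million_tokens / 10,
    }


estimate = rubin_best_case(baseline_gpus, baseline_cost_per_million_tokens)
print(estimate)
```

Under these assumptions, a training job that needed 1,024 Blackwell GPUs would need 256 Rubin GPUs, and the illustrative token cost would fall from $2.00 to about $0.20 per million tokens.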

The company said the system, designed for very large AI factories, can be scaled using NVIDIA Quantum-X800 InfiniBand and Spectrum-X Ethernet networking components to maintain high computing utilization across massive GPU clusters while reducing training time and overall ownership costs.

NVIDIA also introduced the Vera CPU, which it described as its first central processor developed specifically for the era of agentic AI and reinforcement learning.

According to the company, the Vera CPU delivers twice the efficiency and runs 50% faster than traditional CPUs at rack scale for data processing, AI training and AI-agent inference.

NVIDIA said that as AI agents and reasoning workloads become more advanced, scale, performance and costs increasingly depend on infrastructure that supports models, schedules tasks, runs tools and code, validates results and interacts with data. When CPUs cannot keep up with those workloads, the company said, accelerators sit idle and limit the output of AI factories.

The NVIDIA Vera CPU rack includes up to 256 Vera CPUs capable of running as many as 22,500 independent AI agent or reinforcement-learning environments, the company said. Across configurations, Vera incorporates NVIDIA ConnectX SuperNIC cards and NVIDIA BlueField processors for accelerated networking, storage tasks and security while allowing customers to continue using a unified software system across NVIDIA platforms.
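A quick back-of-the-envelope calculation shows what those rack-level figures imply per processor. The sketch below simply divides the two numbers NVIDIA cited; it assumes the environments are spread evenly across all CPUs in a fully populated rack, which the company did not state.

```python
# Rack-level figures as reported by NVIDIA.
VERA_CPUS_PER_RACK = 256
MAX_ENVIRONMENTS_PER_RACK = 22_500

# Assumption: environments are distributed evenly across the CPUs.
envs_per_cpu = MAX_ENVIRONMENTS_PER_RACK / VERA_CPUS_PER_RACK

print(f"{envs_per_cpu:.1f} environments per CPU")  # prints "87.9 environments per CPU"
```

That works out to roughly 88 concurrent agent or reinforcement-learning environments per Vera CPU at the stated maximum.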

NVIDIA also unveiled the NVIDIA Groq 3 LPX rack, which it described as a milestone in accelerated computing.

The company said LPX and Vera Rubin were designed to meet the needs of agent-based systems, including low latency and long context, and together can provide up to 35 times more inference throughput per megawatt. NVIDIA said the systems can also increase the potential revenue of trillion-parameter models by as much as 10 times.

At large scale, fleets of LPU chips operate as a single large processor for faster inference, the company said. An LPX rack with 256 LPU processors includes 128 gigabytes of on-chip SRAM and 640 terabytes per second of rack-scale communication bandwidth.
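The rack totals can be broken down per chip with simple division. The sketch below assumes the SRAM and bandwidth figures are rack-wide aggregates shared equally among the 256 LPUs; NVIDIA did not publish per-chip numbers in this announcement, so the results are estimates only.

```python
# Rack-level figures as reported by NVIDIA.
LPUS_PER_RACK = 256
RACK_SRAM_GB = 128          # on-chip SRAM, gigabytes
RACK_BANDWIDTH_TBPS = 640   # rack-scale bandwidth, terabytes per second

# Assumption: both totals are split evenly across the LPUs in the rack.
sram_per_lpu_gb = RACK_SRAM_GB / LPUS_PER_RACK
bandwidth_per_lpu_tbps = RACK_BANDWIDTH_TBPS / LPUS_PER_RACK

print(f"SRAM per LPU: {sram_per_lpu_gb} GB")
print(f"Bandwidth per LPU: {bandwidth_per_lpu_tbps} TB/s")
```

On that reading, each LPU would carry about half a gigabyte of on-chip SRAM and account for about 2.5 terabytes per second of the rack's communication bandwidth.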

When deployed with a Vera Rubin NVL72 system, Rubin GPUs and LPU chips jointly process every layer of an AI model for each token to increase throughput, NVIDIA said.

The company said the LPX architecture integrates with Vera Rubin to support trillion-parameter models and context windows of millions of tokens while improving power, memory and compute efficiency. NVIDIA said the system’s performance could raise inference capacity and increase revenue opportunities for AI providers. LPX racks use liquid cooling and are intended to integrate with Vera Rubin-based AI factories. They are expected to become available in the second half of the year.

At the conference, NVIDIA also announced BlueField-4 STX, a new reference architecture intended to upgrade storage infrastructure for workloads created by multiple AI agents.

NVIDIA said AI agents require real-time access to data and contextual working memory to sustain fast, coherent conversations and tasks. As context windows grow, the company said, traditional data and storage architectures can slow AI inference and reduce GPU utilization.

According to NVIDIA, STX allows storage providers to build infrastructure that keeps data close and accessible so AI factories powering agents can deliver higher throughput and faster response times in inference, training and analytics tasks.

The company said STX provides as much as five times higher token throughput, four times greater energy efficiency and twice the data ingestion speed compared with traditional CPU-based high-performance storage architectures.

NVIDIA also introduced the Spectrum-6 SPX Ethernet rack, designed to accelerate data traffic in AI factories. The rack will be available in two configurations, one with Spectrum-X Ethernet switches and one with NVIDIA Quantum-X800 InfiniBand switches, and is intended to provide low-latency, high-throughput communication between server racks at scale.

NVIDIA said products based on the Vera Rubin platform will become available beginning in the second half of the year through partners including major cloud providers Amazon Web Services, Google Cloud, Microsoft Azure and Oracle Cloud Infrastructure, along with NVIDIA cloud partners CoreWeave, Crusoe, Lambda, Nebius, Nscale and Together AI.

Global system manufacturers including Cisco, Dell Technologies, Hewlett Packard Enterprise, Lenovo and Supermicro are expected to offer a range of servers based on Vera Rubin products, along with Aivres, ASUS, Foxconn, GIGABYTE, Inventec, Pegatron, Quanta Cloud Technology, Wistron and Wiwynn.

NVIDIA said leading AI labs and model developers, including Anthropic, Meta, Mistral AI and OpenAI, are evaluating the Vera Rubin platform for training large advanced AI models and running multimodal systems with long context at lower latency and cost than previous GPU generations.